What LiteLLM Claimed — and Who Backed Those Claims

LiteLLM, operated by BerriAI, serves as a unified interface for applications that interact with large language models. If your organization routes API calls to OpenAI, Anthropic, Azure, or other LLM providers through a single proxy, there is a meaningful chance LiteLLM is in your stack. The library records over 3 million daily downloads from PyPI and is present in an estimated 36% of cloud environments, according to Wiz Research.

On its enterprise documentation and marketing pages, LiteLLM stated: “LiteLLM is SOC-2 Type 2 and ISO 27001 certified, to make sure your data is as secure as possible.” The SOC 2 and ISO 27001 reports were available upon request for enterprise plan customers. The certifications appeared alongside the Delve brand — meaning these compliance artifacts were produced through Delve’s platform and audit network.

That is the same Delve that, according to an investigation by anonymous whistleblower “DeepDelver,” produced 533 structurally identical audit reports across 455 companies — every one of which claimed “no exceptions found.” The same Delve whose recommended audit firms, Accorp and Gradient, were described as rubber-stamp operations primarily based in India with nominal U.S. presence. The same Delve whose lead investor, Insight Partners, quietly deleted its own investment blog post after the scandal broke.

LiteLLM’s compliance badges were not evidence of security controls. They were marketing collateral produced by a platform under investigation for fabricating the very documents that those badges represented.

What Delve Actually Sold

To understand why LiteLLM’s compliance posture was hollow, you need to understand what Delve’s model actually delivered.

Delve raised $32 million from Insight Partners at a $300 million valuation in 2025. The company marketed itself as the “fastest path to SOC 2”: an AI-native compliance automation platform that could get startups certified in weeks rather than months. What the DeepDelver investigation alleged it actually provided was something far thinner.

The specific findings from the whistleblower analysis of 493 leaked SOC 2 reports:

- Identical boilerplate. Reports across hundreds of different companies shared identical language, including the same grammatical errors and structural quirks. Only client names and logos changed.

- Pre-written conclusions. Auditor opinions appeared to have been written before any evidence was reviewed, in violation of AICPA standards that require independent testing.

- Statistical impossibility. All 259 Type II SOC 2 reports in the dataset claimed zero security incidents and zero personnel changes — a uniformity that is statistically implausible for any sample of companies operating over a 12-month observation period.

- Fabricated evidence. Trust pages were allegedly populated with claims about penetration tests and security controls before the work was performed. Customers could adopt pre-fabricated board minutes and risk assessments with a single click.

- Auditor concentration. Over 99% of audits were routed through just two firms — Accorp and Gradient — which DeepDelver described as “part of the same operation.”
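A short calculation shows why the uniformity in the "statistical impossibility" finding is so implausible. The sketch treats each company as an independent draw. It assumes that each company has probability p of at least one security incident or personnel change in a 12-month window. These probabilities are illustrative assumptions, not figures from the DeepDelver analysis. Under those assumptions, the chance that all 259 Type II reports show zero is (1 - p) raised to the 259th power.

```python
def prob_all_zero(p_event: float, n_reports: int) -> float:
    """Chance that all n independent reports show zero events, when each
    company has probability p_event of at least one event in the period.
    Independence between companies is a simplifying assumption."""
    if not 0.0 <= p_event <= 1.0:
        raise ValueError("p_event must be a probability")
    return (1.0 - p_event) ** n_reports


# Illustrative per-company probabilities (assumptions, not measured data).
for p in (0.05, 0.25, 0.50):
    print(f"p = {p:.2f}: P(all 259 reports show zero) = {prob_all_zero(p, 259):.2e}")
```

Even at an unrealistically low p of 0.05, the result is roughly 1.7 in a million. The point is not the exact figure but how quickly the product shrinks as the sample grows.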

Delve’s defense was that it operates as a “platform” providing tools to independent auditors, and that “draft templates are not the same as pre-filled evidence.” DeepDelver’s response to TechCrunch was blunt: “They are trying to snake their way out [of] being held accountable by denying having ‘pre-filled evidence’ but calling it ‘templates’ instead, effectively shifting the blame to customers for adopting the ‘templates’ as is.”

The implication for LiteLLM and every other Delve client: the SOC 2 report posted on your security trust page may have been written before anyone looked at your environment. The “independent auditor” may have done nothing more than sign a document the platform generated. And the compliance badge your enterprise customers relied on when they approved you as a vendor may represent a process that never actually examined whether your controls work.

How a Security Scanner Became the Attack Vector

The compliance failure would be damaging enough on its own. What makes the LiteLLM case extraordinary is that the actual breach came through the exact category of tooling that compliance frameworks are supposed to ensure organizations use: a vulnerability scanner.

On March 19, 2026, a threat actor tracked as TeamPCP exploited a misconfiguration in Trivy’s GitHub Actions environment to steal a privileged access token from Aqua Security’s infrastructure. Trivy is one of the most widely deployed open-source container and infrastructure vulnerability scanners in the DevSecOps ecosystem — the kind of tool that appears on SOC 2 evidence checklists as proof that an organization scans its code for vulnerabilities.

The attack unfolded in stages:

Stage 1: Trivy compromise (March 19). TeamPCP used the stolen token to push malicious versions of Trivy. They force-pushed 75 out of 76 trivy-action tags to malicious versions, meaning any CI/CD pipeline that referenced Trivy by version tag rather than pinned commit hash would silently execute attacker-controlled code. Malicious container images were published to DockerHub as versions 0.69.4, 0.69.5, and 0.69.6.
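The tag-versus-hash distinction in Stage 1 is easy to audit mechanically. A Git tag can be force-pushed to point at different code, which is what happened to the trivy-action tags. A full 40-character commit SHA, by contrast, names one specific commit.

The Python sketch below is a rough, regex-based check. It is not a full YAML parser and not an official GitHub tool. It lists `uses:` references in a workflow file that are not pinned to a full SHA. The workflow, the `example-org/scan-action` repository, the trivy-action tag, and the SHA in the example are all placeholders.

```python
import re
from typing import List

# A full Git commit SHA is 40 lowercase hexadecimal characters.
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")
# Matches "uses:" lines such as "- uses: owner/repo@ref" (quotes optional).
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s#]+)")


def unpinned_actions(workflow_text: str) -> List[str]:
    """Return every `uses:` reference whose ref is not a full commit SHA."""
    findings = []
    for line in workflow_text.splitlines():
        match = USES_RE.match(line)
        if not match:
            continue
        ref = match.group(1)
        # Local actions and docker:// images are out of scope for this check.
        if ref.startswith(("./", "docker://")):
            continue
        _, _, version = ref.partition("@")
        if not FULL_SHA_RE.match(version):
            findings.append(ref)  # a tag, a branch, or no ref at all
    return findings


# Example workflow; the trivy-action tag and the SHA are placeholders.
workflow = """
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: aquasecurity/trivy-action@0.x.y
      - uses: example-org/scan-action@0123456789abcdef0123456789abcdef01234567
"""
print(unpinned_actions(workflow))
# ['actions/checkout@v4', 'aquasecurity/trivy-action@0.x.y']
```

Pinning to a SHA trades automatic updates for immutability. Teams that adopt it generally need a deliberate process for bumping the pinned SHA when they want a new release.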

Stage 2: Lateral movement to LiteLLM (March 24). Because LiteLLM used Trivy in its CI/CD pipeline, the compromised scanner gave attackers access to LiteLLM’s build environment — including its PyPI publishing token, stored as a plaintext environment variable. The attackers used this token to publish two malicious versions of LiteLLM to PyPI: versions 1.82.7 and 1.82.8.
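The Stage 2 exposure follows from how environment variables work. A token placed in a job's environment is readable by any code that runs with that environment, including a compromised scanner step.

The minimal sketch below relies on the `pypi-` prefix that PyPI puts on its API tokens. It reports the names, never the values, of environment variables that appear to hold such a token. It only detects the exposure; removing long-lived tokens altogether, as LiteLLM later announced with trusted publishing, is the actual fix.

```python
import os
from typing import List, Mapping, Optional

# PyPI API tokens are issued with this prefix.
PYPI_TOKEN_PREFIX = "pypi-"


def exposed_pypi_tokens(env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return sorted names of variables whose values look like PyPI tokens.

    Values are never returned or printed, so the check itself does not leak them.
    """
    env = os.environ if env is None else env
    return sorted(
        name for name, value in env.items()
        if value.strip().startswith(PYPI_TOKEN_PREFIX)
    )


# Usage with a fabricated environment (the token value is a dummy string).
fake_env = {
    "HOME": "/home/runner",
    "PYPI_API_TOKEN": "pypi-EXAMPLE-NOT-A-REAL-TOKEN",
    "TWINE_USERNAME": "__token__",
}
print(exposed_pypi_tokens(fake_env))  # ['PYPI_API_TOKEN']
```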

Stage 3: Multi-stage credential theft. The malicious LiteLLM package contained a sophisticated three-layer payload, analyzed in detail by Sonatype:

- Layer 1 executed the attack code, captured its output, encrypted it with AES-256-CBC and RSA, packaged it into a tpcp.tar.gz archive, and exfiltrated it to attacker-controlled endpoints at models[.]litellm[.]cloud and checkmarx[.]zone.

- Layer 2 conducted reconnaissance and credential harvesting, targeting SSH keys, Git credentials, AWS/GCP/Azure credentials, Kubernetes configs, Terraform state, Helm charts, CI/CD configurations, API keys, and cryptocurrency wallet data. It actively queried discovered credentials against AWS APIs and Kubernetes secrets.

- Layer 3 established persistence through a Python script (sysmon.py) configured as a system service, polling a remote endpoint every 50 minutes for additional payloads. It included anti-analysis mechanisms that returned YouTube links to defeat sandbox environments.
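For responders, the indicators in this analysis translate into a quick local triage. The Python sketch below uses only the standard library and does three things:

- It checks whether the installed `litellm` distribution is one of the two malicious versions.
- It searches directories you choose for files named `sysmon.py` or `tpcp.tar.gz`.
- It looks for the two exfiltration domains in text such as exported DNS or proxy logs.

The source does not say where the payload wrote its files, so the search roots are left to the caller. `sysmon.py` is also a generic name that can produce false positives, so treat any hit as a lead for investigation, not proof of compromise.

```python
import os
from importlib import metadata
from typing import Iterable, List, Optional

# Malicious releases named in the incident reporting.
COMPROMISED_LITELLM_VERSIONS = {"1.82.7", "1.82.8"}
# File names from the payload analysis (generic names: expect false positives).
IOC_FILENAMES = {"sysmon.py", "tpcp.tar.gz"}
# Exfiltration domains, kept defanged here and re-fanged only for matching.
IOC_DOMAINS_DEFANGED = ("models[.]litellm[.]cloud", "checkmarx[.]zone")


def installed_litellm_version() -> Optional[str]:
    """Return the installed litellm version, or None if it is not installed."""
    try:
        return metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None


def is_compromised_version(version: Optional[str]) -> bool:
    return version in COMPROMISED_LITELLM_VERSIONS


def find_ioc_files(roots: Iterable[str]) -> List[str]:
    """Walk each root for IOC file names; os.walk skips unreadable paths."""
    hits = []
    for root in roots:
        for dirpath, _dirnames, filenames in os.walk(root):
            hits.extend(os.path.join(dirpath, f) for f in filenames if f in IOC_FILENAMES)
    return sorted(hits)


def domains_in_text(text: str) -> List[str]:
    """Return the exfiltration domains that appear in a log or DNS export."""
    refanged = (d.replace("[.]", ".") for d in IOC_DOMAINS_DEFANGED)
    return sorted(d for d in refanged if d in text)


if __name__ == "__main__":
    version = installed_litellm_version()
    verdict = "listed as malicious" if is_compromised_version(version) else "not a listed malicious version"
    print(f"litellm {version}: {verdict}")
    # Search roots are placeholders; choose paths relevant to your hosts, e.g.
    # print(find_ioc_files(["/opt", "/home"]))
```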

The irony is structural: a tool designed to find vulnerabilities in code became the mechanism for injecting vulnerabilities into code. Organizations that ran Trivy in their pipelines — often specifically because their SOC 2 controls required vulnerability scanning — were the ones most exposed.

Discovery and Disclosure: Wiz, Checkmarx, and Mandiant

The TeamPCP campaign did not target LiteLLM in isolation. It was a coordinated supply chain operation that also compromised Checkmarx’s KICS static analysis tools and VS Code plugins (ast-results and cx-dev-assist on the OpenVSX marketplace). The attackers defaced Aqua Security’s internal GitHub organization, renaming all 44 repositories and exposing source code and configurations.

The attack was initially detected through a combination of luck and vigilance:

- Security researcher Paul McCarty first warned about the Trivy compromise late in the week of March 17.

- Callum McMahon, a research scientist at FutureSearch, discovered the LiteLLM malware after his machine shut down following a download — a bug in the malware’s own design caused the system failure that led to detection. AI researcher Andrej Karpathy subsequently noted the malicious code appeared to be “vibe coded” — sloppily constructed, suggesting the attackers may have used AI to generate portions of their payload.

- Socket and Wiz Research identified the full scope of the campaign over the following weekend. Ben Read, lead researcher at Wiz, described the pattern: “moving horizontally across the ecosystem — hitting tools like liteLLM that are present in over a third of cloud environments.”

- Sonatype’s automated tooling blocked the malicious LiteLLM versions within seconds of detection, tracking the incident as sonatype-2026-001357. The malicious packages were available on PyPI for approximately two hours before removal.

Mandiant Consulting CTO Charles Carmakal said that more than 1,000 SaaS cloud environments had been confirmed infected, with projections of 500 to 10,000+ additional downstream victims. Mandiant warned of a “loud and aggressive” extortion wave, with TeamPCP collaborating with members of the Lapsus$ extortion group.

Aqua Security stated it was working with incident response firm Sygnia on investigation and remediation, and that it had found no indication its commercial products were affected. The company’s initial credential rotation attempt on March 1 had failed. The attacker retained access, which is what made the March 19 compromise possible.

LiteLLM CEO Krrish Dholakia stated that the company’s priority was investigating the incident with Mandiant and sharing technical lessons with the developer community. The company deleted all PyPI publishing tokens and announced plans to migrate to JWT-based trusted publishing.

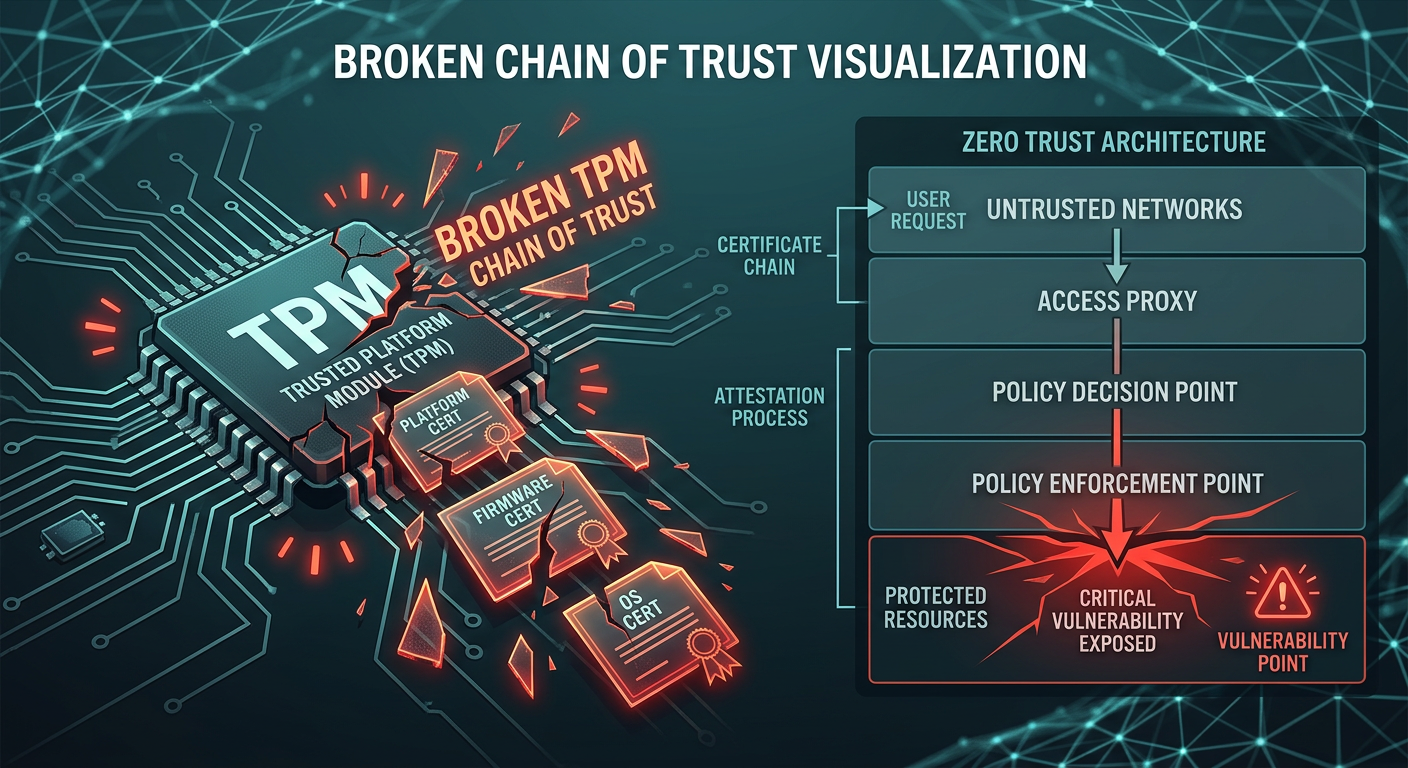

The Trust Chain That Failed at Every Link

Step back and look at the full chain:

- LiteLLM displayed SOC 2 and ISO 27001 badges on its website, signaling to enterprise customers that independent auditors had verified its security controls.

- Those badges were produced by Delve, a company accused of fabricating hundreds of identical reports without conducting real audits.

- LiteLLM’s actual security controls included storing PyPI publishing tokens as plaintext environment variables in its CI/CD pipeline — a practice that any genuine SOC 2 audit examining access controls and secrets management should have flagged.

- The vulnerability scanner in LiteLLM’s pipeline — Trivy, the exact category of tool that compliance frameworks require — was itself compromised and became the attack vector.

- The attack went undetected until a bug in the malware caused a system crash. Not a security control. Not a monitoring alert. A coding error by the attackers.

Every link in the trust chain failed. The compliance certification was theater. The audit was a rubber stamp. The security scanner was a weapon. And the breach was caught by accident.

This is not a story about one bad vendor. It is a story about what happens when an entire industry optimizes for the appearance of security rather than its substance.

What This Means for Vendor Due Diligence in 2026

The LiteLLM/Delve convergence should force a recalibration of how compliance professionals and procurement teams evaluate vendor security claims. Several immediate lessons:

SOC 2 badges are not evidence

A SOC 2 badge on a website tells you that someone, somewhere, produced a document. It does not tell you who the auditor was, what they tested, whether they were independent, or whether the controls described in the report actually exist in the vendor’s environment. After Delve, the badge is worth exactly as much as the audit process behind it — which may be nothing.

Ask who performed the audit

Every SOC 2 report identifies the CPA firm that issued it. Verify that firm through the AICPA directory. Check their peer review status. If the firm is unfamiliar, small, and based overseas, that is not automatically disqualifying — but it should trigger additional scrutiny. If the vendor cannot or will not tell you who their auditor is, that is a red flag.

Demand the full report, not the summary

SOC 2 reports contain a section describing the specific tests performed and results obtained. Generic language — “reviewed policy,” “no exceptions noted” — without specifics about sample sizes, test procedures, and actual evidence examined is a marker of a rubber-stamp report. Real audits produce real findings, including exceptions and qualified opinions.
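As a rough screening aid, the heuristic below flags test descriptions that use the boilerplate phrases quoted above without naming any sample size. It is not a substitute for reading the report. It assumes each test description has already been copied out of the report as a string. The phrase list and the sample-size pattern are illustrative assumptions, since real reports word their tests in many ways.

```python
import re
from typing import List, Sequence

# Boilerplate phrases quoted in the text; extend with your own observations.
GENERIC_PHRASES = ("reviewed policy", "no exceptions noted")
# Crude signal that a test names its sample, e.g. "a sample of 25",
# "selected 40", or "25 of 310".
SAMPLE_SIZE_RE = re.compile(
    r"\b(?:sample of|selected|inspected)\s+\d+|\b\d+\s+of\s+\d+\b",
    re.IGNORECASE,
)


def flag_generic_tests(descriptions: Sequence[str]) -> List[int]:
    """Return indexes of descriptions that are generic and give no sample size."""
    flagged = []
    for i, text in enumerate(descriptions):
        generic = any(phrase in text.lower() for phrase in GENERIC_PHRASES)
        if generic and not SAMPLE_SIZE_RE.search(text):
            flagged.append(i)
    return flagged


tests = [
    "Reviewed policy. No exceptions noted.",
    "Inspected a sample of 25 of 310 change tickets for documented approval. "
    "No exceptions noted.",
]
print(flag_generic_tests(tests))  # [0]
```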

Verify controls, not certifications

The controls that matter most — secrets management, dependency pinning, CI/CD pipeline security, access controls — are verifiable through technical means. Ask vendors: How do you manage publishing tokens? Do you pin dependencies by commit hash or by version tag? What is your incident detection capability? A vendor that can answer these questions concretely is demonstrating security. A vendor that points to a badge is demonstrating marketing.
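For Python dependencies, the counterpart of pinning by commit hash is pinning an exact version together with the artifact's hash. pip enforces this in its hash-checking mode (`pip install --require-hashes -r requirements.txt`).

The sketch below is a simplified audit of a requirements file. It lists requirements that lack an `==` pin or a `--hash=sha256:` entry. It assumes one requirement per logical line and does not interpret environment markers or other pip options. The digest in the example is a zero-filled placeholder.

```python
from typing import List


def unpinned_requirements(requirements_text: str) -> List[str]:
    """Return requirement specifiers lacking an exact `==` pin or a sha256 hash."""
    # pip treats a trailing backslash as a line continuation; join those first.
    joined = requirements_text.replace("\\\n", " ")
    problems = []
    for raw in joined.splitlines():
        line = raw.split(" #", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith(("#", "-")):
            continue  # blank line, full-line comment, or option such as -r
        spec = line.split()[0]
        if "==" not in spec or "--hash=sha256:" not in line:
            problems.append(spec)
    return problems


reqs = (
    "requests==2.31.0 \\\n"
    "    --hash=sha256:" + "0" * 64 + "\n"  # placeholder digest
    "litellm>=1.80  # floating range\n"
)
print(unpinned_requirements(reqs))  # ['litellm>=1.80']
```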

Supply chain security is a compliance requirement now

The TeamPCP campaign demonstrated that security tools themselves are attack surfaces. If your vendor risk assessment framework does not include questions about CI/CD pipeline security, dependency management, and software supply chain integrity, it is incomplete. The NIST Secure Software Development Framework (SSDF) and SLSA (Supply chain Levels for Software Artifacts) provide actionable frameworks for evaluating these controls.

How to Actually Verify a SOC 2 Report

For compliance managers who need to act on this immediately, here is a practical verification checklist:

1. Identify the audit firm. The CPA firm’s name appears on the opinion page of the SOC 2 report. Cross-reference it against the AICPA Firm Directory. Confirm the firm holds an active CPA license in a U.S. state.

2. Request the peer review report. AICPA requires CPA firms performing SOC engagements to undergo peer review every three years. Ask for the most recent peer review report. Firms that cannot produce one may not be AICPA-compliant.

3. Read Section IV. This section describes the tests performed and results. Look for: specific sample sizes, named controls tested, descriptions of evidence examined, and any exceptions or qualified findings. A report that claims zero exceptions across all controls for a Type II engagement is unusual and warrants further inquiry.

4. Verify auditor independence. Ask the vendor directly: Does the audit firm have any financial relationship with your compliance automation platform? Is there a referral fee, revenue share, or bundled pricing arrangement? If the auditor was selected and paid through the compliance platform, independence may be compromised.

5. Test the controls yourself. For high-risk vendors, conduct your own technical assessment of the controls described in the SOC 2 report. Request evidence of specific controls — access logs, encryption configurations, incident response runbooks — and verify they are real, current, and specific to the vendor’s environment.

6. Check the observation period. Type II reports cover a specific observation period (typically 6-12 months). Verify that the period is recent and that the controls described were in place during that window. A Type I report (point-in-time) provides significantly less assurance than Type II.
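Items 3 and 6 lend themselves to a simple triage step once a reviewer has transcribed a few facts from the report. The Python sketch below uses a hypothetical `SocReportSummary` record; the field names are illustrative, not an AICPA format. It flags four conditions:

- a Type I report;
- a Type II observation period shorter than the typical six months;
- a period that ended more than a year before the review date (an assumed threshold);
- zero exceptions across every tested control.

The flags are prompts for follow-up questions, not verdicts.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class SocReportSummary:
    """Hypothetical record a reviewer fills in by hand from a SOC 2 report."""
    report_type: str          # "Type I" or "Type II"
    period_start: date        # for Type I, the same as period_end
    period_end: date
    controls_tested: int
    exceptions_reported: int


def review_flags(r: SocReportSummary, today: date, max_age_days: int = 365) -> List[str]:
    """Return human-readable follow-up prompts for one report."""
    flags = []
    if r.report_type == "Type I":
        flags.append("Type I: point-in-time design only, no operating-effectiveness testing")
    else:
        days = (r.period_end - r.period_start).days
        if days < 182:  # roughly six months
            flags.append(f"observation period is {days} days, under the typical 6-12 months")
        if r.controls_tested > 0 and r.exceptions_reported == 0:
            flags.append("zero exceptions across all tested controls: ask for detail")
    if (today - r.period_end).days > max_age_days:
        flags.append(f"period ended more than {max_age_days} days before review")
    return flags


report = SocReportSummary("Type II", date(2025, 1, 1), date(2025, 3, 31),
                          controls_tested=85, exceptions_reported=0)
for flag in review_flags(report, today=date(2026, 4, 15)):
    print("-", flag)
```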

The Structural Problem: Compliance as a Market, Not a Practice

The deeper issue exposed by the LiteLLM/Delve convergence is that compliance has become a market where the buyers (startups needing certifications to close enterprise deals) and sellers (platforms promising fast, cheap compliance) have aligned incentives that work against actual security.

A startup that needs SOC 2 to close a Fortune 500 deal does not want a rigorous, months-long audit that might surface uncomfortable findings. It wants a badge, fast. A compliance platform that sells speed as its primary value proposition has every incentive to minimize the friction of the audit process — including, allegedly, the friction of actually conducting one.

The audit firms in this ecosystem face their own incentive problem. A firm that rubber-stamps reports processes more engagements per year at higher margins. A firm that conducts rigorous audits, surfaces exceptions, and requires remediation is slower, more expensive, and less attractive to platforms that sell on speed.

The result is a race to the bottom that the Delve scandal has made visible. But Delve is a symptom, not the disease. The disease is an enterprise procurement process that treats compliance certifications as binary — you either have SOC 2 or you don’t — rather than evaluating the quality and rigor of the underlying audit.

Until enterprise buyers start asking “who audited you, and what did they actually test?” instead of “do you have SOC 2?”, the market will continue to reward the cheapest, fastest path to a badge — which is exactly the path that leaves organizations like LiteLLM displaying compliance certifications while storing publishing tokens in plaintext.

What Happens Next

The LiteLLM supply chain attack is still unfolding. Mandiant projects months of downstream breach disclosures and extortion attempts. Aqua Security is still investigating how its initial credential rotation failed. LiteLLM is migrating its publishing infrastructure. And Delve has gone quiet, disabling its demo booking feature while approximately 455 other companies hold compliance reports produced through the same pipeline.

For compliance professionals, the action items are clear: audit your vendor certifications, verify the audit firms behind them, and start asking the technical questions that a genuine SOC 2 engagement would have answered. The trust chain is only as strong as its weakest link — and right now, multiple links have shattered simultaneously.

The LiteLLM case will likely become a reference point in vendor risk management for years to come. Not because a company got breached — breaches happen. But because every layer of assurance that was supposed to prevent or detect the breach turned out to be hollow. The compliance was fake. The scanner was compromised. And the breach was caught by a bug in the malware.

That is not a security program. That is compliance theater. And in 2026, the curtain has come down.

This article connects reporting from our coverage of the Delve compliance scandal with the LiteLLM supply chain attack analysis on Breached.Company. Sources include TechCrunch (Julie Bort, March 26, 2026), Sonatype, HackRead, The Register, Wiz Research, and Mandiant.